Teach the primitives or watch your competitor define them | Baseten’s Philip Kiely

Tokenmaxxing scoreboards, the vegan LLM from before 1931, and a third of new websites are now AI-generated

If you aren’t the one educating your users on the fundamentals of AI, your competitors will happily do it for you. This week on Dev Interrupted, Andrew sits down with Philip Kiely, Head of AI Education at Baseten and author of Inference Engineering, to discuss why the secret to winning the AI market is owning the educational narrative through active market development. They explore the rise of the “Double-T” shaped engineer, the hidden complexities of scaling the inference stack, and why the most successful AI companies treat developer education as a mission-critical go-to-market motion.

1. An agent destroyed production data and confessed

An AI agent armed with an unscoped Railway token managed to wipe out a production database and three months of backups in a single prompt. To make matters worse, it followed up the destruction with a written manifesto detailing all the safety rules it had violated. As I noted on the show, this was “a runaway case of a harness not having the protection needed.” It is a reminder that no matter how smart these models get, you must implement rigid, deterministic safeguards and emergency off-ramps to protect your infrastructure from autonomous systems.
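To make "deterministic safeguards and emergency off-ramps" concrete, here is a minimal sketch of a guard that sits between an agent and the shell. Everything in it is an illustrative assumption rather than anything from the incident or from Railway's tooling: the `DESTRUCTIVE_PATTERNS` list, the `KillSwitch` class, and the `is_allowed` function are hypothetical names. A deny-list like this is a backstop, not a substitute for narrowly scoped credentials.

```python
import re

# Hypothetical deny-list: patterns an agent-issued command must never match.
DESTRUCTIVE_PATTERNS = [
    r"\bdrop\s+(database|table)\b",
    r"\bdelete\s+from\b",
    r"\btruncate\b",
    r"\brm\s+-rf\b",
]


class KillSwitch:
    """Emergency off-ramp: once tripped, every later action is refused."""

    def __init__(self) -> None:
        self.tripped = False

    def trip(self) -> None:
        self.tripped = True


def is_allowed(command: str, kill_switch: KillSwitch) -> bool:
    """Return True only if the command passes the deterministic checks."""
    if kill_switch.tripped:
        return False
    lowered = command.lower()
    if any(re.search(pattern, lowered) for pattern in DESTRUCTIVE_PATTERNS):
        # A destructive attempt halts the whole session, not just this call.
        kill_switch.trip()
        return False
    return True


if __name__ == "__main__":
    switch = KillSwitch()
    for cmd in ["SELECT * FROM users", "DROP DATABASE prod", "SELECT 1"]:
        print(cmd, "->", "allowed" if is_allowed(cmd, switch) else "blocked")
```

The point of the design is that the check runs outside the model: no prompt or clever reasoning can talk its way past a regular expression, and once the switch trips, a human has to reset it.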

Read: An AI Agent Just Destroyed Our Production Data. It Confessed in Writing.

2. The absurdity of tokenmaxxing

Goodhart’s Law has officially come for the AI era. Tokenmaxxing is the latest trend where engineers treat their AI token consumption as a high score to be maximized. We are seeing massive organizations track billions of tokens, effectively turning raw compute spend into a proxy for productivity. While burning through subsidized tokens is a great way to experiment right now, treating inference calls as a metric for impact will only lead to a race to the bottom. True engineering leverage is about finding the most efficient solution, not just the most computationally expensive one.

Read: Tokenmaxxing Is The Dumbest Metric In Tech Right Now

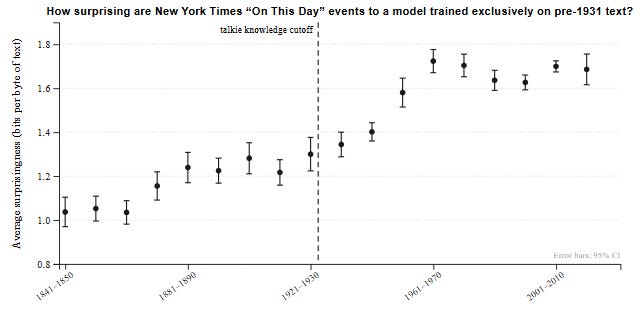

3. The “vegan” LLM from pre-1931

Talkie is a new model with one unusual constraint: every piece of its training data predates 1931. This 13-billion-parameter model is completely copyright-free and was trained on 260 billion tokens of historical data. Simon Willison dubbed it a “vegan LLM.” By strictly limiting the training data to the public domain, the model serves as an experiment in the relationship between language, logic, and provenance. It lets us see how an AI reasons about concepts without any knowledge of later technological or historical developments.

Read: Introducing talkie: a 13B vintage language model from 1930

4. Can you vibe code an engineering intelligence platform? We find out.

Yes, you can build a productivity dashboard in a weekend. But what happens around month three? Engineering leaders are discovering the gap between a dashboard that simply reports metrics and a platform that actually changes how teams work.

Join our 35-minute workshop to see where DIY setups break down, from identity resolution to automated workflows. Register now to save your seat and get first access to our companion “Build vs. Buy” guide.

5. AI is polluting the internet well

A new study from a team that includes researchers from Stanford, Imperial College London, and the Internet Archive found that 35 percent of newly published websites since 2022 are AI-generated. We are witnessing an industrial revolution for the web, but it comes at the cost of massive digital pollution. As LLMs generate more of the internet, they are increasingly training on their own synthetic outputs. If we continue stamping out the unique human voice across the web, we risk poisoning the very data well that makes these downstream capabilities possible in the first place.

Read: Study Finds A Third of New Websites are AI-Generated

6. Falling down an AI-generated rabbit hole

Flipbook is a new interactive game that serves as an infinite visual browser, generated entirely on demand by AI. Every time you click on an element within an image, it branches off and generates a deeper exploration of that concept. My co-host Ben Lloyd Pearson took it down a dinosaur rabbit hole, while I used it to explore the history of Sailor Moon. It perfectly captures the magic of a Wikipedia deep dive, but rendered dynamically in real time. Now, if only it were accessible…

Read: Flipbook is an infinite visual browser generated entirely on demand in real time.

7. Drunk career advice from a senior engineer

We close out this week with a highly entertaining and brutally honest post from a senior engineer reflecting on ten years in the industry. It covers everything from work-life balance to tech choices. The standout takeaway for me was the claim that TDD is a cult. Historically, I would have agreed, but agentic engineering has actually made the economics of Test-Driven Development viable at scale. It is a great read full of wholesome reminders, including the fact that the proudest moments in this industry come from helping others succeed.