Scaffolding is coping, not scaling, and other lessons from Codex | OpenAI’s Thibault Sottiaux

How Block reached 95% AI adoption, the end of manual syntax, and GPT-5.2.

If you rely on complex scaffolding to build AI agents, you aren't scaling; you are coping. Thibault Sottiaux from OpenAI’s Codex team joins us to explain why they are ruthlessly removing the harness to solve for true agentic autonomy. We discuss the bitter lesson of vertical integration, why scalable primitives beat clever tricks, and how the rise of the super bus factor is reshaping engineering careers.

1. How Block reached 95% AI engineering adoption

Block has moved past the experimental phase and firmly into the “Gas Town” era of engineering. Angie Jones, a guest on Friday’s episode, reveals that most of their teams are operating at stage 5 or 6 on the maturity scale, thanks largely to a focus on resilience. When an AI tool hallucinates or produces slop, Block engineers don’t abandon the tool; they lean in and engineer the context required to fix it. By focusing on “repo readiness,” which means creating specific rule files and context documents for every project, they have turned AI from a novelty into a reliable teammate that handles the heavy lifting across iOS, Android, and backend systems.
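For a rough feel of what a “repo readiness” check could look like, here is a minimal Python sketch. It is not Block’s tooling: the file names in `CONTEXT_FILES` and the scoring rule are assumptions made up for illustration, since the article does not say which rule files or context documents Block uses.

```python
from pathlib import Path

# Hypothetical list of context files. The article does not name the
# rule files or context documents Block actually uses.
CONTEXT_FILES = ("AGENTS.md", "CONTRIBUTING.md", "docs/architecture.md")


def repo_readiness(repo_root, required=CONTEXT_FILES):
    """Return (score, missing): the fraction of required context files
    that exist under repo_root, and the list of those that are absent."""
    root = Path(repo_root)
    missing = [name for name in required if not (root / name).is_file()]
    score = (len(required) - len(missing)) / len(required) if required else 1.0
    return score, missing


if __name__ == "__main__":
    score, missing = repo_readiness(".")
    print(f"readiness: {score:.0%}")
    for name in missing:
        print(f"missing context file: {name}")
```

Run from a project root, it prints a readiness percentage and the context files still missing, which is one simple way to track the habit across many repositories.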

Read: AI-Assisted Development at Block

2. Era of humans writing code is over

Ryan Dahl, the creator of Node.js and Deno, recently stated that the era of humans writing code is effectively over. We agree, but with a caveat. While the manual act of typing syntax is becoming grunt work, the discipline of engineering is more vital than ever. Coding by hand now feels like a waste of time compared to the leverage gained from managing 10 agents running in parallel. The job description is shifting from “writer of code” to “orchestrator of solutions,” where the primary challenge is managing the handoffs between multiple AI agents to compress two weeks of work into a single day.

Read: Era of humans writing code is over

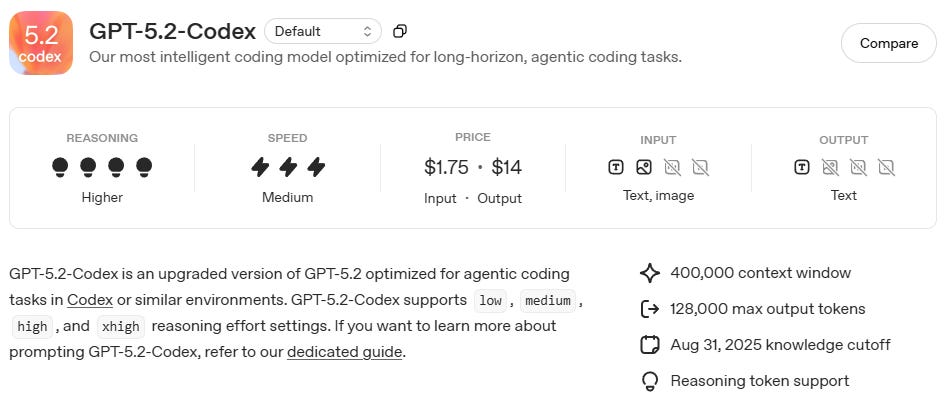

3. The end of model monogamy

OpenAI just threw a massive wrench into the “Claude is king” narrative. With the release of GPT-5.2-Codex, they have placed themselves back neck-and-neck with Anthropic’s flagship models. For developers, this reinforces a critical lesson: don’t marry a single model. Ben Lloyd Pearson describes ChatGPT as his “daily driver” for reliability, while reserving Claude for complex builds and Gemini for massive data reasoning. To maximize velocity, engineers need to treat these models like tools in a belt, rotating through them to find the specific strengths required for the task at hand.
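The “tools in a belt” idea can be sketched as a small routing table. This is an illustration only: the task categories, the `pick_model` helper, and the fallback order are assumptions, and the strings are product-family labels rather than real API model identifiers.

```python
# Hypothetical routing table loosely based on the preferences quoted above.
# The task categories and the default are assumptions for illustration.
ROUTES = {
    "daily": "chatgpt",
    "complex_build": "claude",
    "large_data_reasoning": "gemini",
}
DEFAULT_MODEL = "chatgpt"


def pick_model(task_kind, unavailable=()):
    """Pick the preferred model family for a task, falling back to any
    other known family when the preferred one is unavailable."""
    preferred = ROUTES.get(task_kind, DEFAULT_MODEL)
    if preferred not in unavailable:
        return preferred
    # dict.fromkeys de-duplicates while keeping the table's order.
    for model in dict.fromkeys(ROUTES.values()):
        if model not in unavailable:
            return model
    raise RuntimeError("no model available")
```

Keeping the mapping in one place makes it cheap to swap a model in or out when a new release shifts the rankings, which is the practical point of not marrying a single model.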

Read: OpenAI releases GPT-5.2-Codex in Responses API

4. CES 2026: Hypersonic knives and musical lollipops

CES is often a mix of genuine innovation and what can only be described as “physical vaporware”: concepts that feel like software bugs manifested in plastic and metal. From lollipops that conduct music through your teeth into your ear, to “hypersonic knives” straight out of Dune that slice vegetables via vibration, the hardware world is getting bizarre. While many of these gadgets may never hit the shelves, they highlight a creative explosion in product design, likely fueled by the same AI acceleration we are seeing in software.

Read: The weirdest tech of CES: It gets very weird, very fast

5. What does elite engineering look like in 2026?

LinearB analyzed 8.1 million pull requests across 4,800 engineering teams to find the answer. In this on-demand workshop, leaders from CircleCI, Apollo GraphQL, and LinearB break down the results.

You’ll get a clear view of the market with 20 core SDLC metrics, plus three brand-new AI metrics designed to show you exactly how AI tools are impacting delivery velocity and code quality. No fluff. Just data. Watch the workshop to see how your team stacks up.